Backpropagation and Automatic Differentiation

#

Backpropagation applies the chain rule repeatedly and efficiently across a computational graph.

Chain rule:

\[
\frac{dL}{dx} = \frac{dL}{dy} \cdot \frac{dy}{dx}
\]

flowchart LR

x --> y

y --> L

Automatic differentiation computes exact derivatives efficiently using computational graphs.
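As a rough illustration of the chain x → y → L in the diagram above, here is a minimal reverse-mode sketch in plain Python. The `Value` class and the choice of y = x² and L = sin(y) are assumptions made for this example only; real frameworks are far more elaborate.

```python
import math


class Value:
    """A scalar node in a computational graph (hypothetical helper class)."""

    def __init__(self, data, parents=(), local_grads=()):
        self.data = data                # result of the forward computation
        self.grad = 0.0                 # dL/d(this node), filled in by backward()
        self.parents = parents          # nodes this value was computed from
        self.local_grads = local_grads  # d(this)/d(parent), one per parent

    def __mul__(self, other):
        return Value(self.data * other.data, (self, other), (other.data, self.data))

    def sin(self):
        return Value(math.sin(self.data), (self,), (math.cos(self.data),))

    def backward(self):
        # Build a topological order, then walk it in reverse so that each
        # node's gradient is complete before it is passed to its parents.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node.parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0  # dL/dL
        for node in reversed(order):
            for parent, local in zip(node.parents, node.local_grads):
                parent.grad += node.grad * local  # chain rule step


x = Value(2.0)
y = x * x      # y = x^2, so dy/dx = 2x
L = y.sin()    # L = sin(y), so dL/dy = cos(y)
L.backward()

# Hand-derived value of dL/dy * dy/dx at x = 2, for comparison with x.grad.
analytic = math.cos(4.0) * 4.0
```

After `L.backward()`, `x.grad` should agree with `analytic` up to floating-point error, which is the sense in which automatic differentiation is exact rather than a finite-difference approximation.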

Higher-order derivatives

#

Angles and Orthogonality

#

Once we define an inner product, we can define the angle between two vectors.

Angles allow us to measure how aligned or different two vectors are in space.

Key Idea:

Angle measures similarity between vectors.

Orthogonality means the inner product is zero: the vectors are perpendicular and share no common direction (no similarity).

Why It Matters in Machine Learning

#

- PCA produces orthogonal components

- Orthogonal features reduce redundancy

- How quickly the loss changes along an update direction depends on its angle to the gradient

For vectors in n-dimensional space:
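Assuming the standard dot product as the inner product, the angle θ between x and y satisfies cos θ = (x · y) / (‖x‖ ‖y‖). A minimal sketch follows; the function name `angle_between` and the sample vectors are illustrative only.

```python
import math


def angle_between(x, y):
    """Angle in radians between two non-zero vectors, using the dot product."""
    dot = sum(a * b for a, b in zip(x, y))
    norm_x = math.sqrt(sum(a * a for a in x))
    norm_y = math.sqrt(sum(b * b for b in y))
    cos_theta = dot / (norm_x * norm_y)
    # Clamp to [-1, 1] to guard against floating-point round-off.
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.acos(cos_theta)


# Same direction: the angle is (numerically) zero.
aligned = angle_between([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])

# Dot product is zero: the vectors are orthogonal, so the angle is pi/2.
orthogonal = angle_between([1.0, 0.0, 0.0], [0.0, 1.0, 0.0])
```

A small angle means the vectors are closely aligned, while an angle of π/2 corresponds to orthogonality.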

Linearization and multivariate Taylor’s series

#

Computing maxima and minima for unconstrained optimization

#

July 4, 2024

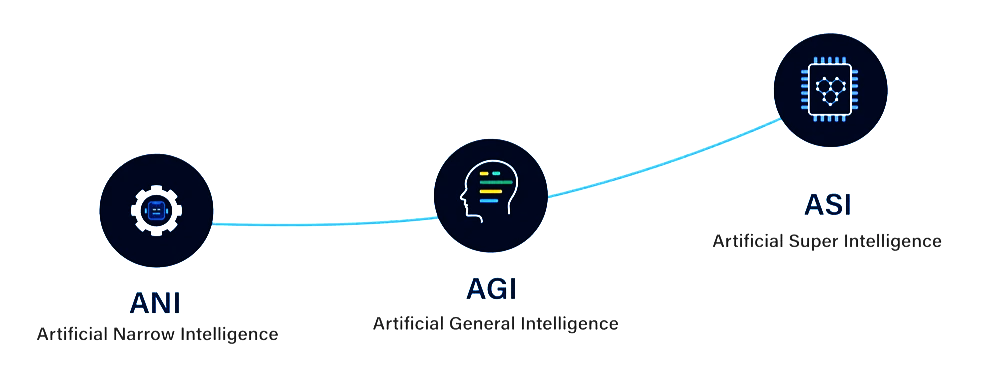

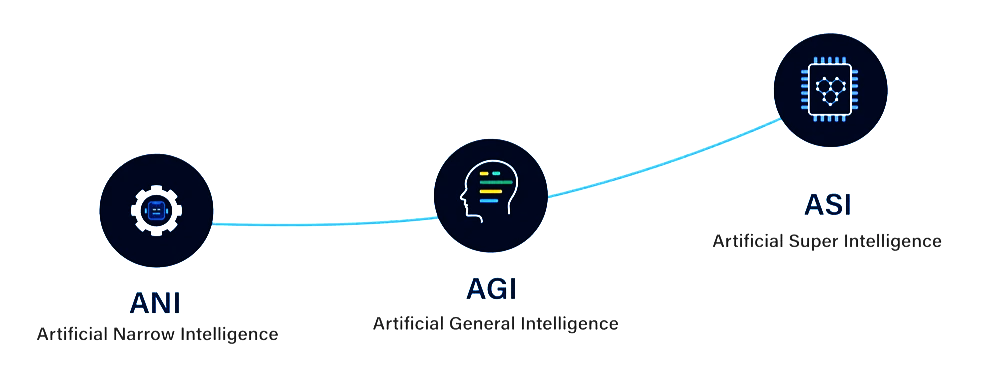

AI Development Stages: ANI → AGI → ASI

#

Artificial Intelligence is often described in three stages, based on capability and scope:

- ANI: Task-specific intelligence (today’s AI)

- AGI: Human-level general intelligence (future goal)

- ASI: Beyond human intelligence (theoretical)

ANI — Artificial Narrow Intelligence

#

- Also called Weak AI

- Designed to perform one specific task

- Operates within a predefined environment

- Cannot generalise beyond its training

- Most AI systems today are ANI

Examples

Neural Networks

#

- A network of artificial neurons inspired by how neurons function in the human brain.

- At its core, a neural network is a mathematical model designed to process and learn from data.

- Neural networks form the foundation of Deep Learning, which involves training large and complex networks on vast amounts of data.

flowchart LR

subgraph subGraph0["Input Layer"]

I1(("Input 1"))

I2(("Input 2"))

I3(("Input 3"))

end

subgraph subGraph1["Hidden Layer"]

H1(("Hidden 1"))

H2(("Hidden 2"))

H3(("Hidden 3"))

end

subgraph subGraph2["Output Layer"]

O(("Output"))

end

I1 --> H1 & H2 & H3

I2 --> H1 & H2 & H3

I3 --> H1 & H2 & H3

H1 --> O

H2 --> O

H3 --> O

style I1 fill:#C8E6C9

style I2 fill:#C8E6C9

style I3 fill:#C8E6C9

style H1 stroke:#2962FF,fill:#BBDEFB

style H2 fill:#BBDEFB

style H3 fill:#BBDEFB

style O fill:#FFCDD2

style subGraph0 stroke:none,fill:transparent

style subGraph1 stroke:none,fill:transparent

style subGraph2 stroke:none,fill:transparent

Structure of a Neural Network

#

A typical neural network has three main layers:
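In the diagram above these are the input, hidden, and output layers. As a rough sketch of how data flows through them, the forward pass below uses a 3-3-1 network to match the diagram. The sigmoid activation and all weight and bias values are made up for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense(inputs, weights, biases):
    """One fully connected layer: each output is sigmoid(w . inputs + b)."""
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


# Illustrative (made-up) parameters for a 3-3-1 network.
W_hidden = [[0.2, -0.4, 0.1],
            [0.5, 0.3, -0.2],
            [-0.3, 0.8, 0.6]]
b_hidden = [0.0, 0.1, -0.1]
W_out = [[0.7, -0.5, 0.2]]
b_out = [0.05]

inputs = [1.0, 0.5, -1.0]                   # input layer: raw features
hidden = dense(inputs, W_hidden, b_hidden)  # hidden layer: 3 activations
output = dense(hidden, W_out, b_out)        # output layer: 1 prediction
```

Each row of a weight matrix holds the incoming connections of one neuron, which mirrors the arrows from every input node to every hidden node in the diagram.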

August 6, 2024

Machine Learning

#

stateDiagram-v2

%% ===== CLASS DEFINITIONS (Math-based colours) =====

classDef algebra fill:#cfe8ff,stroke:#1e3a8a,stroke-width:1px

classDef probability fill:#d1fae5,stroke:#065f46,stroke-width:1px

classDef geometry fill:#ffedd5,stroke:#9a3412,stroke-width:1px

classDef logic fill:#ede9fe,stroke:#5b21b6,stroke-width:1px

classDef category font-style:italic,font-weight:bold,fill:#aaaaaa,stroke:#374151,stroke-width:3px

%% ===== ROOT =====

ML: Machine Learning

%% ===== SUPERVISED =====

ML --> SL:::category

SL: Supervised Learning

SL --> Regression

Regression --> LR:::algebra

LR: Linear Regression

LR --> NN:::algebra

NN: Neural Network

NN --> DT:::logic

DT: Decision Tree

SL --> Classification

Classification --> NB:::probability

NB: Naive Bayes

NB --> KNN:::geometry

KNN: k-Nearest Neighbours

KNN --> SVM:::algebra

SVM: Support Vector Machine

%% ===== UNSUPERVISED =====

ML --> USL:::category

USL: Unsupervised Learning

USL --> Clustering

Clustering --> KM:::geometry

KM: K-Means

KM --> GMM:::probability

GMM: Gaussian Mixture Model

GMM --> HMM:::probability

HMM: Hidden Markov Model

%% ===== REINFORCEMENT =====

ML --> RL:::category

RL: Reinforcement Learning

RL --> DM:::logic

DM: Decision Making

Mathematical Legend

Algebra / Linear Algebra (Blue)

#

Used heavily when models rely on:

Artificial Neuron and Perceptron

#

Knowledge in neural networks is stored in connection weights, and learning means modifying those weights.

Biological Neuron

#

A biological neuron is a specialised cell that processes and transmits information through electrical and chemical signals.

Core components:

- Dendrites: receive signals from other neurons

- Cell body (soma): processes incoming signals

- Axon: transmits the output signal

- Synapses: connection points between neurons

Biological intuition:

- many inputs arrive at one neuron

- one neuron can connect out to many neurons

- massive parallelism enables fast perception and recognition

Artificial Neuron

#

An artificial neuron is a simplified computational model inspired by biological neurons.
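One common form of this model is a weighted sum of the inputs followed by a threshold. The sketch below pairs it with the classic perceptron learning rule to show what "learning means modifying weights" can look like in practice. The function names, the step activation, the learning rate, and the AND dataset are all assumptions chosen for this example.

```python
def predict(weights, bias, inputs):
    """Artificial neuron: weighted sum of inputs followed by a step activation."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, lr=1.0, epochs=20):
    """Perceptron learning rule: adjust each weight by lr * error * input."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias


# Logical AND is linearly separable, so the rule converges on it.
and_samples = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
weights, bias = train_perceptron(and_samples)
predictions = [predict(weights, bias, x) for x, _ in and_samples]
```

The inputs play the role of signals arriving at the dendrites, the weights act like synapse strengths, and the thresholded output corresponds to the signal sent down the axon.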

Machine Learning Workflow

#

Data is the foundation of any machine learning system.

Data quality often matters more than model complexity.

Role of Data

#

Data determines:

- What patterns the model can learn

- How well it generalises

- Whether bias or noise is introduced

Bad data → bad model (even with perfect algorithms).

Data Preprocessing and Wrangling

#

Raw data is rarely ready for training.

Data Issues

- Noise

  - For objects, noise is an extraneous object

  - For attributes, noise refers to modification of original values

  - Handle: apply a log transform or z-score standardisation (rescaling to zero mean and unit variance)

- Outliers

  - Data objects with characteristics that are considerably different from most of the other data objects in the data set

  - Handle: use the IQR method

    - Find the lower and upper bounds and replace each outlier with the nearest bound

- Missing Values

  - Eliminate data objects or variables

  - Handle: estimate missing values

    - Mean, median or mode

    - Prefer the median when the data contains outliers, since it is less affected by extreme values

  - Ignore the missing value during analysis

- Duplicate Data

  - Major issue when merging data from heterogeneous sources

- Inconsistent Codes

  - Find all unique values and transfer all inconsistent ones to
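The missing-value and outlier handling listed above can be sketched with the Python standard library. The helper names, the sample data, and the conventional factor `k=1.5` for the IQR bounds are assumptions for illustration; the notes do not specify them.

```python
import statistics


def impute_median(values):
    """Replace missing entries (None) with the median of the observed values."""
    observed = [v for v in values if v is not None]
    median = statistics.median(observed)
    return [median if v is None else v for v in values]


def clip_outliers_iqr(values, k=1.5):
    """Clip values outside [Q1 - k*IQR, Q3 + k*IQR] to the nearest bound."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [min(max(v, lower), upper) for v in values]


raw = [12.0, 15.0, None, 14.0, 13.0, 120.0, 16.0, None, 11.0]
filled = impute_median(raw)           # each None becomes the observed median
cleaned = clip_outliers_iqr(filled)   # 120.0 is pulled down to the upper bound
```

The median is used for imputation here because the sample contains an extreme value (120.0) that would drag the mean upward. Note that `statistics.quantiles` uses its default "exclusive" method, so other tools may place the quartiles slightly differently.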

Data Preprocessing techniques