Basis and Rank

# A basis is a minimal set of linearly independent vectors that spans a space.

The dimension of a space is the number of vectors in a basis.

Key Idea:

Basis = independence + spanning.

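Rank tells us how many independent directions exist in a matrix. It is the number of linearly independent columns (equivalently, rows), i.e. the dimension of the column space.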
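To make rank concrete, here is a minimal sketch in plain Python that counts pivots during Gaussian elimination. Row reduction is one standard way to compute rank; it is assumed here, not taken from these notes. The matrix is assumed to be a list of rows, and `matrix_rank` is a hypothetical helper name. `Fraction` keeps the arithmetic exact, so every pivot test is a clean zero/nonzero check with no floating-point tolerance.

```python
from fractions import Fraction


def matrix_rank(rows):
    """Hypothetical helper: rank of a matrix (list of rows) via Gaussian elimination."""
    # Exact-arithmetic copy so the caller's matrix is left untouched.
    m = [[Fraction(x) for x in row] for row in rows]
    n_rows = len(m)
    n_cols = len(m[0]) if m else 0
    rank = 0
    for col in range(n_cols):
        # Find a row at or below `rank` with a nonzero entry in this column.
        pivot = next((r for r in range(rank, n_rows) if m[r][col] != 0), None)
        if pivot is None:
            continue  # this column adds no new independent direction
        m[rank], m[pivot] = m[pivot], m[rank]
        # Eliminate this column from every row below the pivot row.
        for r in range(rank + 1, n_rows):
            factor = m[r][col] / m[rank][col]
            m[r] = [a - factor * b for a, b in zip(m[r], m[rank])]
        rank += 1
        if rank == n_rows:
            break
    return rank


# The third column equals the first plus the second,
# so only two independent directions exist.
A = [[1, 0, 1],
     [0, 1, 1],
     [2, 3, 5]]
print(matrix_rank(A))  # 2
```

The columns of a matrix are linearly independent exactly when the rank equals the number of columns. Here three columns give rank 2, so they are dependent.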

A basis must satisfy two conditions ⭐

- The vectors must be linearly independent.
- The vectors must span the space.

This means:

- No redundancy (independence)
- Full coverage (spanning)

Spanning:

\[
\text{Span}(\mathbf{v}_1, \mathbf{v}_2, \dots, \mathbf{v}_k) = V
\]

Independence:

\[
c_1\mathbf{v}_1 + \cdots + c_k\mathbf{v}_k = \mathbf{0} \;\Rightarrow\; c_1 = \cdots = c_k = 0
\]

Why Basis Matters

- Represents space efficiently
- Removes redundancy
- Helps define coordinates
- Used in ML for feature representation

Dimension

# Dimension is the number of vectors in a basis. Every basis of the same space contains the same number of vectors, so this number is well defined.
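Because every vector can be written in exactly one way as a combination of basis vectors, a basis defines coordinates, and the number of coordinates equals the dimension. The sketch below assumes a basis of \( \mathbb{R}^2 \) given as two integer pairs and solves for the two coordinates with Cramer's rule, a choice made here for brevity rather than something these notes prescribe; `coordinates_2d` is a hypothetical name.

```python
from fractions import Fraction


def coordinates_2d(b1, b2, x):
    """Return (c1, c2) with c1*b1 + c2*b2 == x, for a basis {b1, b2} of R^2.

    Hypothetical helper: solves the 2x2 system with Cramer's rule.
    """
    det = b1[0] * b2[1] - b2[0] * b1[1]
    if det == 0:
        # Zero determinant: b1 and b2 are dependent, so they are not a basis.
        raise ValueError("b1 and b2 do not form a basis of R^2")
    c1 = Fraction(x[0] * b2[1] - b2[0] * x[1], det)
    c2 = Fraction(b1[0] * x[1] - x[0] * b1[1], det)
    return c1, c2


c1, c2 = coordinates_2d((1, 1), (1, -1), (3, 1))
print(c1, c2)  # 2 1
```

Here \( (3, 1) = 2\,(1, 1) + 1\,(1, -1) \), so its coordinates in this basis are \( (2, 1) \), while in the standard basis they are \( (3, 1) \).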

Linear Independence

# A set of vectors is linearly independent if none of them can be written as a linear combination of the others.

\[
c_1\mathbf{v}_1 + \cdots + c_k\mathbf{v}_k = \mathbf{0}
\;\Rightarrow\;
c_1=\cdots=c_k=0
\]

Independence means each vector adds new information.
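A small worked example, with vectors chosen here for illustration: take \( \mathbf{v}_1 = (1,0) \), \( \mathbf{v}_2 = (0,1) \) and \( \mathbf{v}_3 = (1,1) \) in \( \mathbb{R}^2 \). Since \( \mathbf{v}_3 = \mathbf{v}_1 + \mathbf{v}_2 \), the combination below equals \( \mathbf{0} \) with nonzero coefficients, so the set is linearly dependent and \( \mathbf{v}_3 \) adds no new information:

\[
1\cdot\mathbf{v}_1 + 1\cdot\mathbf{v}_2 + (-1)\cdot\mathbf{v}_3 = (1,0) + (0,1) - (1,1) = (0,0) = \mathbf{0}
\]

Dropping \( \mathbf{v}_3 \) leaves \( \{\mathbf{v}_1, \mathbf{v}_2\} \), which is independent: \( c_1(1,0) + c_2(0,1) = (c_1, c_2) = \mathbf{0} \) forces \( c_1 = c_2 = 0 \).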

Norm

# A norm measures the length (magnitude) of a vector.

The norm of a vector \( \mathbf{x} \) measures the distance from the origin to the point \( \mathbf{x} \). A common example is the Euclidean norm:

\[
\lVert \mathbf{x} \rVert_2 = \sqrt{x_1^2 + \cdots + x_n^2}
\]

Key Idea:

Norm = measure of size or length of a vector.

It generalises the idea of distance in geometry to higher dimensions.
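A minimal sketch of the Euclidean norm formula in plain Python, assuming vectors are lists of numbers; `euclidean_norm` is an illustrative name, not a library function. The three-component call shows the same formula working beyond two dimensions.

```python
import math


def euclidean_norm(x):
    """Illustrative helper: square root of the sum of squared components."""
    return math.sqrt(sum(xi * xi for xi in x))


print(euclidean_norm([3, 4]))     # 5.0
print(euclidean_norm([1, 2, 2]))  # 3.0
# The standard library computes the same quantity
# (math.hypot accepts any number of coordinates since Python 3.8).
print(math.hypot(3, 4))           # 5.0
```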

Inner Products and Dot Product

# An inner product maps two vectors to a single scalar.

It allows us to measure:

- similarity
- vector length
- projections
- orthogonality

```mermaid
flowchart TD
T["Inner<br/>products<br/>(types)"] --> DOT["Euclidean<br/>Dot product"]
T --> WIP["Weighted<br/>inner product"]
T --> FN["Function-space<br/>(integral)"]
T --> HERM["Complex<br/>Hermitian"]
T --> MAT["Matrix<br/>inner product<br/>(Frobenius)"]
DOT --> Rn["Vectors in<br/>R^n"]
WIP --> SPD["SPD matrix<br/>W"]
FN --> L2["L2 space<br/>functions"]
HERM --> Cn["Vectors in<br/>C^n"]
MAT --> Mnm["Matrices<br/>R^{m×n}"]
style T fill:#90CAF9,stroke:#1E88E5,color:#000
style DOT fill:#C8E6C9,stroke:#2E7D32,color:#000
style WIP fill:#C8E6C9,stroke:#2E7D32,color:#000
style FN fill:#C8E6C9,stroke:#2E7D32,color:#000
style HERM fill:#C8E6C9,stroke:#2E7D32,color:#000
style MAT fill:#C8E6C9,stroke:#2E7D32,color:#000
style Rn fill:#CE93D8,stroke:#8E24AA,color:#000
style SPD fill:#CE93D8,stroke:#8E24AA,color:#000
style L2 fill:#CE93D8,stroke:#8E24AA,color:#000
style Cn fill:#CE93D8,stroke:#8E24AA,color:#000
style Mnm fill:#CE93D8,stroke:#8E24AA,color:#000
```

Definition

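# For vectors \( \mathbf{a}, \mathbf{b} \in \mathbb{R}^n \), the (Euclidean) dot product is the sum of the products of matching components:

\[
\mathbf{a} \cdot \mathbf{b} = \langle \mathbf{a}, \mathbf{b} \rangle = \sum_{i=1}^{n} a_i b_i = a_1 b_1 + \cdots + a_n b_n
\]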
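The dot product multiplies matching components and sums them. The sketch below uses plain Python with vectors as lists; `dot` is an illustrative helper, not a library call. It also shows three of the uses listed above: length, projection, and orthogonality.

```python
import math


def dot(a, b):
    """Illustrative helper: Euclidean dot product of two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    return sum(ai * bi for ai, bi in zip(a, b))


a = [1, 2, 3]
b = [4, -5, 6]
print(dot(a, b))             # 12, from 1*4 + 2*(-5) + 3*6

# Length from the inner product: ||a|| = sqrt(<a, a>)
print(math.sqrt(dot(a, a)))  # about 3.742, i.e. sqrt(14)

# Projection of a onto u: (<a, u> / <u, u>) * u
u = [1, 0, 0]
scale = dot(a, u) / dot(u, u)
print([scale * ui for ui in u])  # [1.0, 0.0, 0.0]

# Orthogonality: the inner product is zero
print(dot(a, [3, 0, -1]))    # 0
```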

Lengths and Distances

# The length of a vector is given by its norm.

The distance between two points (vectors) is the norm of their difference.

Distance quantifies how far two vectors (data points) are from each other.

\[
d(\mathbf{x},\mathbf{y}) = \lVert \mathbf{x} - \mathbf{y} \rVert
\]

Key Idea:

Length measures the size of a single vector.

Distance measures the separation between two vectors.

Distance = norm applied to difference.
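Distance as the norm of the difference, sketched in plain Python under the assumption that the norm is the Euclidean one (the formula itself works with any norm); `distance` is a hypothetical name.

```python
import math


def distance(x, y):
    """Hypothetical helper: Euclidean distance, the L2 norm of x - y."""
    if len(x) != len(y):
        raise ValueError("vectors must have the same dimension")
    diff = [xi - yi for xi, yi in zip(x, y)]
    return math.sqrt(sum(d * d for d in diff))


p = [1, 2]
q = [4, 6]
print(distance(p, q))   # 5.0, since p - q = (-3, -4)
print(distance(q, p))   # 5.0 (distance is symmetric)
print(math.dist(p, q))  # 5.0, standard-library equivalent (Python 3.8+)
```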

Angles and Orthogonality

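# Once we define an inner product, we can define the angle \( \theta \) between two nonzero vectors:

\[
\cos\theta = \frac{\langle \mathbf{x}, \mathbf{y} \rangle}{\lVert \mathbf{x} \rVert \, \lVert \mathbf{y} \rVert}
\]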

Angles allow us to measure how aligned or different two vectors are in space.

Key Idea:

Angle measures similarity between vectors.

Orthogonality means the inner product is zero (an angle of 90°): the vectors share no common direction, so there is no similarity.
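A sketch of computing the angle, assuming the Euclidean dot product as the inner product: the cosine of the angle is the inner product divided by the product of the two norms. `angle_between` is an illustrative name, and the vectors are assumed to be nonzero lists of numbers.

```python
import math


def angle_between(x, y):
    """Illustrative helper: angle in radians from cos(theta) = <x, y> / (||x|| ||y||)."""
    inner = sum(a * b for a, b in zip(x, y))
    norm_x = math.sqrt(sum(a * a for a in x))
    norm_y = math.sqrt(sum(b * b for b in y))
    if norm_x == 0 or norm_y == 0:
        raise ValueError("the angle is undefined for a zero vector")
    cos_theta = inner / (norm_x * norm_y)
    # Clamp to [-1, 1] to guard against tiny floating-point overshoot.
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.acos(cos_theta)


print(round(math.degrees(angle_between([1, 0], [0, 1])), 6))   # 90.0 (orthogonal)
print(round(math.degrees(angle_between([1, 0], [1, 1])), 6))   # 45.0
print(round(math.degrees(angle_between([1, 0], [-2, 0])), 6))  # 180.0 (opposite)
```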

Why It Matters in Machine Learning

- PCA produces orthogonal components
- Orthogonal features reduce redundancy
- Gradient directions depend on angle

For vectors in n-dimensional space:

Orthonormal Basis

# A basis is orthonormal if its vectors are:

- orthogonal to each other
- each of unit length

\[
\langle \mathbf{e}_i, \mathbf{e}_j \rangle =
\begin{cases}
1 & i=j \\
0 & i\ne j
\end{cases}
\]

Key Idea:

Orthonormal basis = perfectly independent + perfectly scaled.

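This makes computations simple and numerically stable: the coordinates of any vector \( \mathbf{x} \) are just its inner products with the basis vectors, \( c_i = \langle \mathbf{x}, \mathbf{e}_i \rangle \), so no linear system needs to be solved.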
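The notes do not say how to obtain an orthonormal basis. One standard method is Gram–Schmidt orthogonalisation, sketched below in plain Python as a swapped-in technique; `gram_schmidt`, `dot`, and the `1e-12` tolerance are illustrative choices. The sketch checks the \( \langle \mathbf{e}_i, \mathbf{e}_j \rangle \) condition numerically and then recovers a vector from coordinates computed as plain inner products.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def gram_schmidt(vectors, tol=1e-12):
    """Illustrative helper: orthonormal basis for the span of independent vectors."""
    basis = []
    for v in vectors:
        # Subtract the components of the running remainder w along each
        # basis vector found so far (the "modified" Gram-Schmidt variant).
        w = list(v)
        for e in basis:
            coeff = dot(w, e)
            w = [wi - coeff * ei for wi, ei in zip(w, e)]
        length = math.sqrt(dot(w, w))
        if length < tol:  # assumed tolerance for "numerically zero"
            raise ValueError("input vectors are linearly dependent")
        basis.append([wi / length for wi in w])  # scale to unit length
    return basis


E = gram_schmidt([[3, 1], [2, 2]])

# Check <e_i, e_j> = 1 if i == j else 0, up to floating-point error.
for i, ei in enumerate(E):
    for j, ej in enumerate(E):
        expected = 1.0 if i == j else 0.0
        assert abs(dot(ei, ej) - expected) < 1e-12

# In an orthonormal basis, coordinates are inner products: c_i = <x, e_i>.
x = [4, 3]
coords = [dot(x, e) for e in E]
rebuilt = [sum(c * e[k] for c, e in zip(coords, E)) for k in range(len(x))]
print([round(r, 9) for r in rebuilt])  # [4.0, 3.0]
```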

March 18, 2026

Mathematical Foundations for Machine Learning

# Machine Learning is built on mathematical principles that allow models to:

- represent data
- learn patterns
- optimise performance

```mermaid
flowchart LR
DATA[Data]
MATH[Math Models]
OPT[Optimisation]
MODEL[Trained Model]
DATA --> MATH
MATH --> OPT
OPT --> MODEL
```

Understanding how ML algorithms work internally requires a few core mathematical tools. Algebra deals with relationships between variables and quantities, while calculus focuses on change and optimisation.

March 18, 2026

Linear Algebra

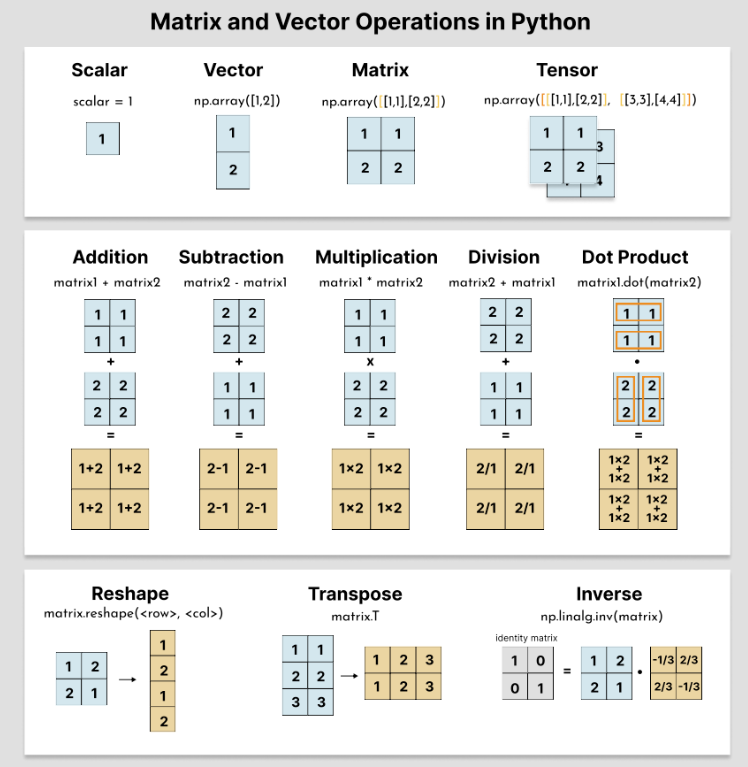

# The study of vectors and matrices is called Linear Algebra.

Linear Algebra provides the mathematical language used to represent data, transformations, and structure in ML.

Why Linear Algebra Matters in ML

- Every machine learning model uses matrices
- All data in ML is represented using vectors and matrices
- Neural networks are pipelines of matrix operations
- Models apply matrix transformations to data
- Optimisation relies on linear algebra operations

What to Learn

- Scalars, vectors, and matrices
- Vector operations (addition, dot product)
- Matrix multiplication (critical)
- Identity matrices and transpose
- Eigenvalues and eigenvectors (conceptual understanding)

Quick glossary:

- Scalar → a number
- Vector → a directed point
- Matrix → a space transformer
- Linear transformation → structured mapping
- Feature → one axis
- Feature space → where data lives
- Vector space → where vectors live
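The matrix operations named above (multiplication, identity, transpose), sketched in plain Python with matrices as lists of rows. The helper names are illustrative. In practice a library such as NumPy provides these operations, for example through the `@` operator and the `.T` attribute.

```python
def matmul(A, B):
    """Illustrative helper: (m x n) times (n x p); entry (i, j) is row i of A dotted with column j of B."""
    if len(A[0]) != len(B):
        raise ValueError("inner dimensions must match")
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]


def identity(n):
    """Illustrative helper: n x n identity, ones on the diagonal and zeros elsewhere."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]


def transpose(A):
    """Illustrative helper: swap rows and columns."""
    return [list(col) for col in zip(*A)]


A = [[1, 2],
     [3, 4]]
B = [[0, 1],
     [1, 0]]
print(matmul(A, B))            # [[2, 1], [4, 3]]
print(matmul(B, A))            # [[3, 4], [1, 2]]  (order matters)
print(matmul(A, identity(2)))  # [[1, 2], [3, 4]]  (identity changes nothing)
print(transpose(A))            # [[1, 3], [2, 4]]
```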

January 29, 2026

Linear Systems

# How systems of linear equations are represented and solved using matrices.

Linear algebra is the study of vectors and the rules for manipulating them. Linear systems describe multiple linear equations that are solved simultaneously, and they connect algebraic equations with matrix representations such as \( A\mathbf{x} = \mathbf{b} \).
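A minimal sketch of solving a square system \( A\mathbf{x} = \mathbf{b} \) by Gaussian elimination with back-substitution. It assumes \( A \) is invertible and given as a list of rows. `solve` is a hypothetical name, and elimination is one standard method among several.

```python
from fractions import Fraction


def solve(A, b):
    """Hypothetical helper: solve A x = b for square, invertible A.

    Fractions keep the arithmetic exact for integer inputs.
    """
    n = len(A)
    # Augmented matrix [A | b].
    M = [[Fraction(v) for v in row] + [Fraction(bi)] for row, bi in zip(A, b)]
    for col in range(n):
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            raise ValueError("matrix is singular: no unique solution")
        M[col], M[pivot] = M[pivot], M[col]
        # Eliminate this variable from the rows below.
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            M[r] = [a - factor * p for a, p in zip(M[r], M[col])]
    # Back-substitution, from the last equation up.
    x = [Fraction(0)] * n
    for i in range(n - 1, -1, -1):
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x


# The system   x + 2y = 5
#             3x + 4y = 6
A = [[1, 2], [3, 4]]
b = [5, 6]
print([str(v) for v in solve(A, b)])  # ['-4', '9/2']
```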

Idea of Closure

# Closure means that performing a specific operation (such as addition or multiplication) on members of a set always produces a result that belongs to the same set.

The idea of closure is fundamental to defining a vector space: a set of vectors must be closed under vector addition and scalar multiplication to form one.
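Closure can be probed, though never proven, by testing random members. The sketch below assumes two example sets in \( \mathbb{R}^2 \) chosen here for illustration: the line \( \{(t, 2t)\} \) through the origin, and the shifted line \( \{(t, 2t + 1)\} \). It uses integer points and integer scalars only, a simplification of the real scalars in the vector-space definition. All function names are hypothetical.

```python
import random


def on_line(v):
    # Hypothetical example set: the line {(t, 2t)}, which passes through the origin.
    return v[1] == 2 * v[0]


def on_shifted_line(v):
    # Hypothetical example set: the line {(t, 2t + 1)}, which misses the origin.
    return v[1] == 2 * v[0] + 1


def find_closure_violation(member, sample, trials=1000, seed=0):
    """Look for a sum or scalar multiple of members that falls outside the set.

    Returns a description of the first counterexample, or None if none was
    found. Random testing can only disprove closure; it can never prove it.
    """
    rng = random.Random(seed)
    for _ in range(trials):
        u, v = sample(rng), sample(rng)
        s = (u[0] + v[0], u[1] + v[1])  # closure under addition
        if not member(s):
            return f"{u} + {v} = {s} is outside the set"
        c = rng.randint(-5, 5)          # integer scalars only (simplification)
        cu = (c * u[0], c * u[1])       # closure under scalar multiplication
        if not member(cu):
            return f"{c} * {u} = {cu} is outside the set"
    return None


def sample_line(rng):
    t = rng.randint(-10, 10)
    return (t, 2 * t)


def sample_shifted_line(rng):
    t = rng.randint(-10, 10)
    return (t, 2 * t + 1)


print(find_closure_violation(on_line, sample_line))                  # None
print(find_closure_violation(on_shifted_line, sample_shifted_line))  # a counterexample
```

The shifted line fails at once: adding two of its points gives \( (t_1 + t_2,\; 2(t_1 + t_2) + 2) \), which is not of the form \( (t, 2t + 1) \). So a line that misses the origin cannot be a vector space.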