Machine Learning

Naïve Bayes #

Naïve Bayes is a probabilistic classifier.

- Supervised Learning Problem

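- Binary classification: the target variable takes one of two classes (the method also extends to more than two classes)

- The hypothesis is the class label you want to predict

- The prior probability of each class (for example, Yes and No) is computed from the training data before looking at an instance's features

- The posterior is the probability of each class after the instance's features have been taken into account

- The instance is assigned to the class whose hypothesis has the highest posterior probability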

It predicts a class label by computing:
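In the usual textbook form (stated here as an assumption, since this page does not spell it out), the predicted class is the one that maximises the prior times the product of the per-feature likelihoods:

\[ \hat{y} = \arg\max_{y} \; P(y) \prod_{i=1}^{n} P(x_i \mid y) \]

A minimal Python sketch of that rule follows. The toy weather data and the function names `train_naive_bayes` and `predict` are hypothetical, and no smoothing is applied, so a feature value never seen with a class gives that class a score of zero.

```python
from collections import Counter, defaultdict


def train_naive_bayes(rows, labels):
    """Estimate priors P(y) and the counts behind P(x_i | y) by counting."""
    n = len(labels)
    class_counts = Counter(labels)
    priors = {y: count / n for y, count in class_counts.items()}
    # feature_counts[y][i][v] = number of class-y rows whose feature i equals v
    feature_counts = defaultdict(lambda: defaultdict(Counter))
    for row, y in zip(rows, labels):
        for i, value in enumerate(row):
            feature_counts[y][i][value] += 1
    return priors, class_counts, feature_counts


def predict(row, priors, class_counts, feature_counts):
    """Return the class with the largest prior * product of likelihoods."""
    scores = {}
    for y, prior in priors.items():
        score = prior
        for i, value in enumerate(row):
            # Likelihood P(x_i = value | y), estimated as a relative frequency.
            score *= feature_counts[y][i][value] / class_counts[y]
        scores[y] = score
    return max(scores, key=scores.get), scores


# Hypothetical toy data: (outlook, temperature) -> play?
rows = [
    ("sunny", "hot"), ("sunny", "mild"), ("rainy", "mild"),
    ("rainy", "hot"), ("sunny", "mild"), ("rainy", "hot"),
]
labels = ["No", "Yes", "Yes", "No", "Yes", "Yes"]

model = train_naive_bayes(rows, labels)
label, scores = predict(("sunny", "hot"), *model)
print(label, scores)
```

In this toy data the prior favours Yes (4 of 6 rows), but the likelihoods for a hot day pull the score towards No, which illustrates the prior being updated by the evidence. The scores are unnormalised; dividing each by their sum would give posterior probabilities.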

Mathematical Foundation

Mathematical Foundations for Machine Learning #

Machine Learning is built on mathematical principles that allow models to:

- represent data

- learn patterns

- optimise performance

Data → Math Models → Optimisation → Trained Model

Understanding how ML algorithms work internally requires a few core mathematical tools. Algebra deals with relationships between variables and quantities, while calculus focuses on change and optimisation.

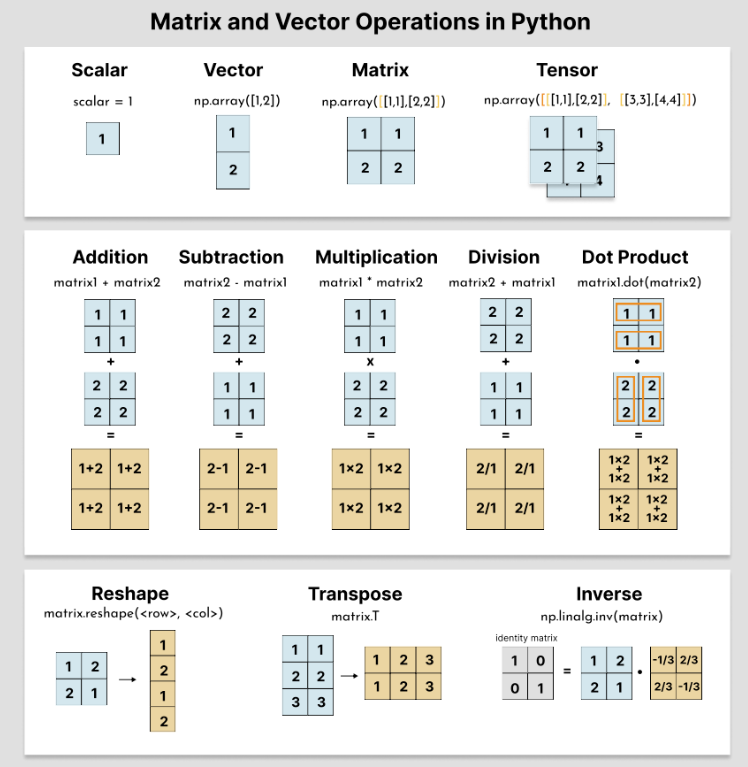

Linear Algebra #

The study of vectors and matrices is called Linear Algebra.

Linear Algebra provides the mathematical language used to represent data, transformations, and structure in ML.

Why Linear Algebra Matters in ML #

- Almost every machine learning model uses matrices

- Data in ML is typically represented using vectors and matrices

- Neural networks are pipelines of matrix operations

- Models apply matrix transformations to data

- Optimisation relies on linear algebra operations

What to Learn #

- Scalars, vectors, and matrices

- Vector operations (addition, dot product)

- Matrix multiplication (critical)

- Identity matrices and transpose

- Eigenvalues and eigenvectors (conceptual understanding)

- Scalar → a number

- Vector → a directed point

- Matrix → a space transformer

- Linear transformation → structured mapping

- Feature → one axis

- Feature space → where data lives

- Vector space → where vectors live
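Several of the operations listed under What to Learn (vector addition, dot product, transpose, matrix multiplication, and the identity matrix) can be sketched in plain Python. This is an illustrative sketch only: the helper names are hypothetical, vectors are lists of numbers, matrices are lists of rows, and no shape checking is done.

```python
def add(u, v):
    """Element-wise sum of two vectors of equal length."""
    return [a + b for a, b in zip(u, v)]


def dot(u, v):
    """Dot product: sum of element-wise products."""
    return sum(a * b for a, b in zip(u, v))


def transpose(A):
    """Swap rows and columns of a matrix."""
    return [list(col) for col in zip(*A)]


def matmul(A, B):
    """Matrix product: entry (i, j) is the dot product of row i of A and column j of B."""
    return [[dot(row, col) for col in zip(*B)] for row in A]


def identity(n):
    """n x n identity matrix: ones on the diagonal, zeros elsewhere."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]


A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]

print(add([1, 2], [3, 4]))       # [4, 6]
print(dot([1, 2, 3], [4, 5, 6])) # 32
print(matmul(A, B))              # [[19, 22], [43, 50]]
print(matmul(A, identity(2)))    # [[1, 2], [3, 4]]
```

Multiplying by the identity leaves `A` unchanged, which is the defining property of the identity matrix. In practice a numerical library would be used instead of nested lists.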

Linear Systems #

This section covers how systems of linear equations are represented and solved using matrices. Linear systems:

- build on linear algebra, the study of vectors and the rules for manipulating them

- describe multiple linear equations that must be satisfied simultaneously

- connect algebraic equations with their matrix representations

Idea of Closure #

A set is closed under an operation (such as addition or multiplication) if performing that operation on members of the set always produces a result that belongs to the same set.

Closure is fundamental to defining a vector space: it ensures that adding vectors, or multiplying a vector by a scalar, never produces an element outside the set.
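As a small illustration of closure, consider the set of 2D vectors whose components are all non-negative. The sketch below is a hypothetical example, not taken from the source: the set is closed under addition, but scaling by a negative number leaves the set, so it is not a vector space. A single passing check does not prove closure, whereas a single counterexample is enough to disprove it.

```python
def in_set(v):
    """Membership test: every component is non-negative."""
    return all(c >= 0 for c in v)


def add(u, v):
    """Vector addition."""
    return [a + b for a, b in zip(u, v)]


def scale(c, v):
    """Scalar multiplication."""
    return [c * a for a in v]


u = [1, 2]
v = [3, 0]

# Adding two members gives [4, 2], which is still in the set.
sum_stays_inside = in_set(add(u, v))

# Scaling a member by -1 gives [-1, -2], which is outside the set.
scaled_stays_inside = in_set(scale(-1, u))

print(sum_stays_inside, scaled_stays_inside)  # True False
```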

Systems of Linear Equations #

A system of linear equations can be written compactly as:

\[ A\mathbf{x}=\mathbf{b} \]

This represents:

- a linear transformation applied to an unknown vector \(\mathbf{x}\)

- producing an output vector \(\mathbf{b}\)

Key components #

Coefficient matrix (A) #

\(A\) contains the coefficients of the variables.
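As a worked illustration (the numbers are chosen here for the example and are not from the source), a system of two equations in two unknowns maps onto the matrix form as follows:

```latex
\[
\begin{aligned}
2x_1 + x_2 &= 5 \\
x_1 - x_2 &= 1
\end{aligned}
\quad\Longleftrightarrow\quad
\underbrace{\begin{bmatrix} 2 & 1 \\ 1 & -1 \end{bmatrix}}_{A}
\underbrace{\begin{bmatrix} x_1 \\ x_2 \end{bmatrix}}_{\mathbf{x}}
=
\underbrace{\begin{bmatrix} 5 \\ 1 \end{bmatrix}}_{\mathbf{b}}
\]
```

Each row of \(A\) holds the coefficients of one equation, and the solution \(x_1 = 2\), \(x_2 = 1\) satisfies both rows.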

Matrices #

Matrices are the core data structure of linear algebra and the workhorse of machine learning.

Almost every ML model can be described as a sequence of matrix operations.

Matrix #

A matrix is a rectangular array of numbers arranged in rows and columns.

\[ A \in \mathbb{R}^{m \times n} \]

An \(m \times n\) matrix has \(m\) rows and \(n\) columns.

Solving Linear Systems #

Solve using:

- Substitution Method

- Elimination Method (multiply, then subtract)

- Cross Multiplication

A linear system can have:

- no solution

- a unique solution

- infinitely many solutions
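The three outcomes can be told apart for a system of two equations in two unknowns. The sketch below is one possible approach, not the source's own method: it uses the determinant of the coefficients, and when the solution is unique it applies the cross-multiplication formulas. The function name `classify_2x2` is hypothetical, and the sketch assumes each equation has at least one nonzero coefficient.

```python
def classify_2x2(a1, b1, c1, a2, b2, c2):
    """Classify the system  a1*x + b1*y = c1,  a2*x + b2*y = c2.

    Assumes each equation has at least one nonzero coefficient.
    Returns ("unique", (x, y)), ("infinite", None), or ("none", None).
    """
    det = a1 * b2 - a2 * b1
    if det != 0:
        # Lines cross at exactly one point (cross-multiplication formulas).
        x = (c1 * b2 - c2 * b1) / det
        y = (a1 * c2 - a2 * c1) / det
        return "unique", (x, y)
    # det == 0: the left-hand sides are proportional (parallel lines).
    if a1 * c2 - a2 * c1 == 0 and b1 * c2 - b2 * c1 == 0:
        return "infinite", None  # same line written twice
    return "none", None          # parallel but distinct lines


print(classify_2x2(2, 1, 5, 1, -1, 1))  # ('unique', (2.0, 1.0))
print(classify_2x2(1, 1, 2, 2, 2, 4))   # ('infinite', None)
print(classify_2x2(1, 1, 2, 2, 2, 5))   # ('none', None)
```

Geometrically, the three cases correspond to two lines that intersect once, coincide, or are parallel and never meet.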

Positive Definite Matrices #

A square matrix \(A\) is positive definite if pre-multiplying and post-multiplying it by the same nonzero vector always gives a positive number, regardless of which nonzero vector we choose; that is, \(\mathbf{x}^\top A \mathbf{x} > 0\) for every \(\mathbf{x} \neq \mathbf{0}\).

Positive definite symmetric matrices have the property that all their eigenvalues are positive.
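Both statements can be checked numerically on small examples. The sketch below is an illustration under stated assumptions: the two matrices are chosen for the example, the helper names are hypothetical, and the eigenvalue formula used applies only to symmetric 2x2 matrices.

```python
import math


def quadratic_form(A, x):
    """Return x^T A x for a square matrix A (list of rows) and a vector x."""
    n = len(x)
    return sum(x[i] * A[i][j] * x[j] for i in range(n) for j in range(n))


def symmetric_2x2_eigenvalues(A):
    """Eigenvalues of a symmetric 2x2 matrix [[a, b], [b, d]], smallest first."""
    a, b, d = A[0][0], A[0][1], A[1][1]
    mean = (a + d) / 2
    radius = math.sqrt(((a - d) / 2) ** 2 + b ** 2)
    return mean - radius, mean + radius


A = [[2, -1], [-1, 2]]  # positive definite
B = [[1, 2], [2, 1]]    # symmetric, but not positive definite

print(symmetric_2x2_eigenvalues(A))  # (1.0, 3.0): both positive
print(quadratic_form(A, [1, 1]))     # 2: positive for this vector
print(symmetric_2x2_eigenvalues(B))  # (-1.0, 3.0): one negative eigenvalue
print(quadratic_form(B, [1, -1]))    # -2: a vector that makes the form negative
```

Trying a handful of vectors can only disprove positive definiteness, as with `B`; for symmetric matrices the sign of the eigenvalues settles the question.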

Forward and Backward Substitution #

Forward and backward substitution are efficient algorithms used to solve linear systems when the coefficient matrix is triangular.

They are typically used after:

- Gaussian elimination

- LU decomposition

1. Forward Substitution (Lower Triangular Systems) #

Used to solve:

\[ L\mathbf{x} = \mathbf{b} \]

where \(L\) is a lower triangular matrix:
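Because every entry above the diagonal of \(L\) is zero, the first equation involves only \(x_1\), the second only \(x_1\) and \(x_2\), and so on, so the unknowns can be computed from top to bottom. A minimal sketch, assuming \(L\) is square with nonzero diagonal entries (the function name and the example values are hypothetical):

```python
def forward_substitution(L, b):
    """Solve L x = b for a lower triangular L with nonzero diagonal entries."""
    n = len(b)
    x = [0.0] * n
    for i in range(n):
        # Contribution of the unknowns that have already been solved.
        known = sum(L[i][j] * x[j] for j in range(i))
        x[i] = (b[i] - known) / L[i][i]
    return x


L = [
    [2, 0, 0],
    [1, 3, 0],
    [4, -1, 2],
]
b = [4, 5, 9]

print(forward_substitution(L, b))  # [2.0, 1.0, 1.0]
```

Backward substitution follows the same pattern for an upper triangular matrix, starting from the last equation and working upwards.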

Inverse Matrix #

The inverse of a matrix is a matrix that, when multiplied with the original matrix, produces the identity matrix.

A square matrix \(A\) is invertible if there exists a matrix \(A^{-1}\) such that:

\[ AA^{-1} = A^{-1}A = I \]

Here:
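As a concrete check of the defining property, a 2x2 matrix with nonzero determinant has a closed-form inverse. The sketch below is illustrative only: the helper names and the example matrix are hypothetical, and exact fractions are used so that the products come out as exactly the identity matrix.

```python
from fractions import Fraction


def inverse_2x2(A):
    """Inverse of a 2x2 matrix [[a, b], [c, d]] using the closed-form formula."""
    (a, b), (c, d) = A
    det = a * d - b * c
    if det == 0:
        raise ValueError("matrix is singular (determinant is 0), so it has no inverse")
    det = Fraction(det)
    return [[d / det, -b / det], [-c / det, a / det]]


def matmul(A, B):
    """Matrix product of two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*B)] for row in A]


A = [[4, 7], [2, 6]]
A_inv = inverse_2x2(A)

print(A_inv)             # entries 3/5, -7/10, -1/5, 2/5 as Fractions
print(matmul(A, A_inv))  # identity matrix
print(matmul(A_inv, A))  # identity matrix
```

Both products give the identity, matching \(AA^{-1} = A^{-1}A = I\). A matrix with zero determinant, such as `[[1, 2], [2, 4]]`, raises the error instead because it has no inverse.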